I have been teaching at Outco, a software engineering career accelerator, for a few months now. A few topics keep resurfacing: data infrastructure theory, hands-on practice, and which SQL dialect to focus on.

Job markets are seasonal, and what is true in this letter may not be true in a few years.

Books to Learn the Theory

Newsletters like mine may go into real-world applications, but when it comes to covering a technology thoroughly, books are hard to beat.

I recommend this Amazon list of books for upping your competency levels in the current job market of Fall 2020. It is not reasonable to expect to read so many pages in a single season, so some of the books further down the list are geared towards preparing for the future of Data Engineering.

This list’s topics range from intermediate Python and SQL to advanced Systems Engineering. Each book has a short review blurb attached, and the list progresses in order of difficulty. A lot of the books are unfortunately not user-friendly. A moment that stuck out to me was re-reading a paragraph in Designing Data-Intensive Applications about five times before I sort of got it. This newsletter largely exists to combat those moments.

Hands-On Practice

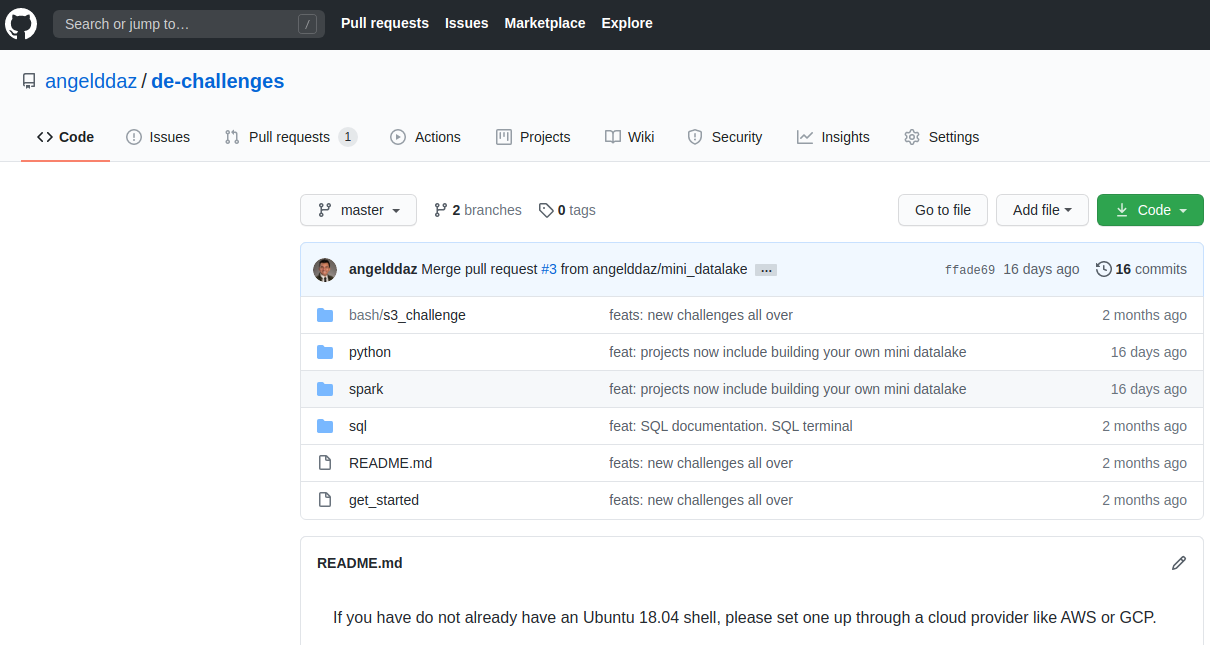

I have been developing a repository that holds a variety of interview questions in README.md documents, organized into a folder for each technology. About 80% of the challenges are modular, so they can be combined into a main project to showcase on your resume.

This repository assumes introductory-level experience with bash/shell, Python, and Databases. The main README document has a Loom screencast on how to set up an Ubuntu shell on a cloud instance, with all the tools you need to take on these challenges.

Which SQL Dialect Should I Focus On?

PostgreSQL

The industry has more or less settled on the view that SQL knowledge is about 90% transferable across dialects. However, budding engineers get little clarity on which dialect to focus on. For the average Data Engineer, I would recommend PostgreSQL.

PostgreSQL and MySQL are the top two open source contenders, meaning you won’t need to rely on a company’s checkbook to learn deeply and become a super-user. MySQL is fantastic for distributed database optimization. That is more the territory of Database Administrators, who face a different set of challenges in managing transactional databases, than of the typical Data Sources and Destinations that Data Engineers work with.

PostgreSQL has two features that stand out to me and make it my dialect of choice for building out Data Transformation Architectures.

Common Table Expressions (CTEs)

CTEs make fundamental data transformation a lot easier because they make queries easier to read and encourage the principle of filtering early and often. To read more about these principles, I wrote a letter called “How to Tackle an Intermediate SQL Problem”.

MySQL is a bit more bare bones: it only gained CTEs in version 8.0, so installations still on 5.7 or earlier do not have them. This bare-bones quality makes MySQL great for optimization of large systems. For the rest of us, the CTE feature in PostgreSQL makes our lives much easier.
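To make "filter early" concrete, here is a minimal sketch of a two-step CTE. The `orders` table and its rows are made up for illustration. To keep it runnable without a database server, it uses Python's built-in sqlite3 module as a stand-in for PostgreSQL. The `WITH` query itself is plain SQL and should read the same in PostgreSQL.

```python
import sqlite3

# SQLite stands in for PostgreSQL here so the sketch runs without a server.
# The table name and rows are hypothetical sample data.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE orders (id INTEGER, customer TEXT, amount REAL, status TEXT);
    INSERT INTO orders VALUES
        (1, 'ana', 40.0, 'paid'),
        (2, 'ana', 15.0, 'refunded'),
        (3, 'ben', 25.0, 'paid'),
        (4, 'ana', 10.0, 'paid');
""")

query = """
WITH paid_orders AS (        -- step 1: filter early
    SELECT customer, amount
    FROM orders
    WHERE status = 'paid'
),
customer_totals AS (         -- step 2: aggregate only the filtered rows
    SELECT customer, SUM(amount) AS total
    FROM paid_orders
    GROUP BY customer
)
SELECT customer, total
FROM customer_totals
ORDER BY total DESC;
"""

rows = conn.execute(query).fetchall()
print(rows)
```

Each named step can be read, and debugged, on its own, which is most of the readability win over nesting the same logic in subqueries.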

Support for JSON

Non-relational (NoSQL) databases like MongoDB are slowly falling by the wayside, partly because PostgreSQL supports functions to work with JSON data. Of course, situational factors will always override generalizations like this. However, for the average engineer and the average company, PostgreSQL JSON will meet 80% of non-relational data needs.

I will always recommend the path of least resistance to building great products. For Fall 2020, I recommend PostgreSQL as your dialect of choice.
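As a rough sketch of what querying JSON inside a relational table looks like, the example below stores documents in a text column and filters on a field inside them. The `events` table and its payloads are made up for illustration. It again uses sqlite3 as a stand-in so it runs locally, and it assumes your SQLite build includes the JSON functions. In PostgreSQL, on a `json` or `jsonb` column, the same lookup would be written `payload ->> 'plan'` rather than `json_extract(payload, '$.plan')`.

```python
import json
import sqlite3

# SQLite stands in for PostgreSQL; json_extract is SQLite's spelling.
# The events table and its payloads are hypothetical sample data.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE events (id INTEGER, payload TEXT)")

events = [
    (1, json.dumps({"user": "ana", "plan": "pro", "tags": ["a", "b"]})),
    (2, json.dumps({"user": "ben", "plan": "free"})),
    (3, json.dumps({"user": "cy", "plan": "pro"})),
]
conn.executemany("INSERT INTO events VALUES (?, ?)", events)

# Filter rows on a field inside the JSON document, then pull out another field.
pro_users = [
    row[0]
    for row in conn.execute(
        """
        SELECT json_extract(payload, '$.user')
        FROM events
        WHERE json_extract(payload, '$.plan') = 'pro'
        ORDER BY id
        """
    )
]
print(pro_users)
```

Note that the documents do not share a fixed shape (only one has `tags`), yet they sit in an ordinary table next to relational columns.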

Conclusion

Good luck!

Curated Content

Salary Negotiations: a must-read for job hunters

Yet another Data Quality rant, I mean, technical blog post

But this time it’s with data build tool (dbt).

Data Discovery article at Shopify

“Data Discovery” is analyzing what your data users actually use, and aligning engineering efforts to that reality.

PostgreSQL JSON tutorial

About the Author and Newsletter

I automate data processes. I work mostly in Python, SQL, and bash. To schedule a consultancy appointment, contact me through ocelotdata.com.

At Modern Data Infrastructure, I democratize the knowledge it takes to understand an open-source, transparent, and reproducible Data Infrastructure. In my spare time, I collect Lenca pottery, walk my dog, and listen to music.

More at: What is Modern Data Infrastructure.